The History of Radiation

April 5, 2015 | Mirion Technologies

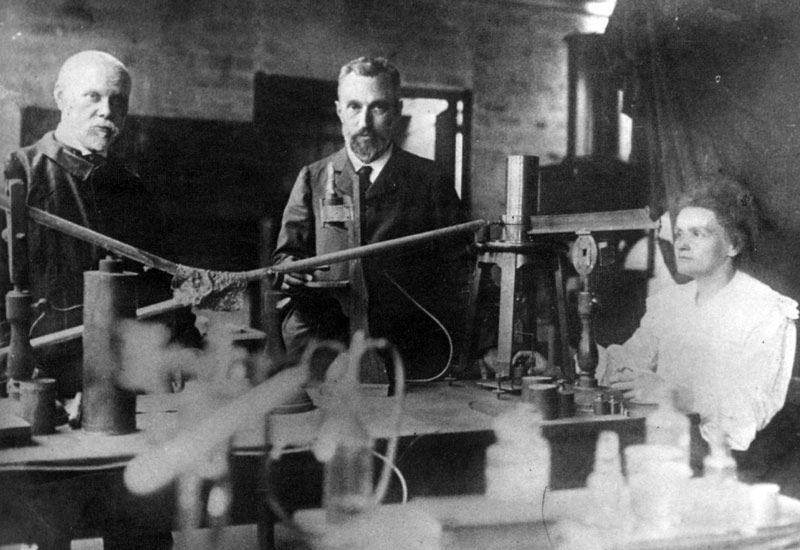

MARIE CURIE, HENRI BECQUEREL, WILHELM RÖNTGEN

The modern understanding of ionizing radiation got its start in 1895 with Wilhelm Röntgen. While experimenting with electric currents passed through various vacuum tubes, he discovered that, even with a tube covered to block its light, some kind of ray was passing through the cover and causing a nearby screen coated with a barium compound to glow. After several experiments, including taking the first X-ray image (of his wife’s hand, showing its skeletal structure), he named the rays “X-Rays” as a temporary designation for something unknown, and the name stuck.

“It seemed at first a new kind of invisible light. It was clearly something new, something unrecorded...” - WILHELM RÖNTGEN

This discovery was followed in 1896 by Henri Becquerel’s discovery that uranium salts gave off similar rays naturally. He originally thought that phosphorescent uranium salts gave off the rays after prolonged exposure to the sun, but he eventually abandoned this hypothesis. Through further experimentation, including with non-phosphorescent uranium, he came to recognize that it was the material itself that gave off the rays.

Although it was Henri Becquerel who discovered the phenomenon, it was Marie Curie, then a doctoral student, who named it: radioactivity. She would go on to do much more pioneering work with radioactive materials, including the discovery that thorium is radioactive and the discovery of two new radioactive elements, polonium and radium. She was awarded the Nobel Prize twice: once in Physics, alongside Henri Becquerel and her husband Pierre, for their work with radioactivity, and again years later in Chemistry for her discovery of radium and polonium. She also conducted pioneering work in radiology, developing and deploying mobile X-ray machines for the battlefields of World War I.

“We must not forget that when radium was discovered no one knew that it would prove useful in hospitals. The work was one of pure science. And this is a proof that scientific work must not be considered from the point of view of the direct usefulness of it. It must be done for itself, for the beauty of science, and then there is always the chance that a scientific discovery may become like the radium, a benefit for mankind.” - MARIE CURIE

She died in 1934 of aplastic anemia, probably developed from extended exposure to various radioactive materials, the dangers of which were only really understood long after most of her exposure had occurred. In fact, her papers (and even her cookbook) are still highly radioactive and many are considered unsafe to handle, stored in shielded boxes and requiring protective equipment to safely review.

RADIUM WATCH DIAL PAINTERS

One of the first major events to highlight the dangers of ionizing radiation was the case of the “Radium Girls,” workers whose job was painting watch dials with radium. Though there was enough suspicion of the effects of ionizing radiation for the management of the company to take precautions themselves, they offered none to the workers actually painting the watch dials. Many of the workers would lick their brushes to shape them properly. Because the human body treats radium like calcium, the radium they swallowed was deposited in their bones and led to radiation sickness. It is unknown how many died from radiation exposure.

After five of the workers sued the company (United States Radium), the ensuing publicity brought the health risks of radiation exposure to public attention. The public interest and the availability of a large sample set (up to 4000 people were employed as dial painters over the years) led to the first long-term study of radiation exposure. The study, which finally ended in 1993, provided a wealth of information on the long-term effects of radiation exposure. The case also provoked drastic changes both in workplace safety and liability and in Health Physics, the field dealing with the health effects and safety issues involved in working with radioactive materials.

THE MANHATTAN PROJECT & THE COLD WAR

The Manhattan Project, the crash study undertaken during World War II to develop the first atomic bomb, led directly to the second long-term study of radiation exposure, namely the study of the survivors of the bombs at Hiroshima and Nagasaki. The bombings, which killed more than 150,000 between them (with some estimates putting the total at closer to 245,000 or more), also left more than 600,000 survivors (hibakusha, literally “explosion affected people”), many of whom have been studied in the years since. Among the findings was that there does not appear to have been an increase in birth defects among the children of those who survived the blasts. There have been, however, about 1900 cancer deaths that can be directly attributed to the bombings.

Since the creation and detonation of the atomic bombs ushered in the “Atomic Age,” much has changed in our understanding and use of radiation and radioactive material. Throughout the Cold War, both sides experimented with the properties and uses of radioactive material in various test reactors and related sites, looking to harness both its strategically valuable offensive power for nuclear weapons and its potentially valuable uses in fields such as medicine and radiography.